WorldReasonBench

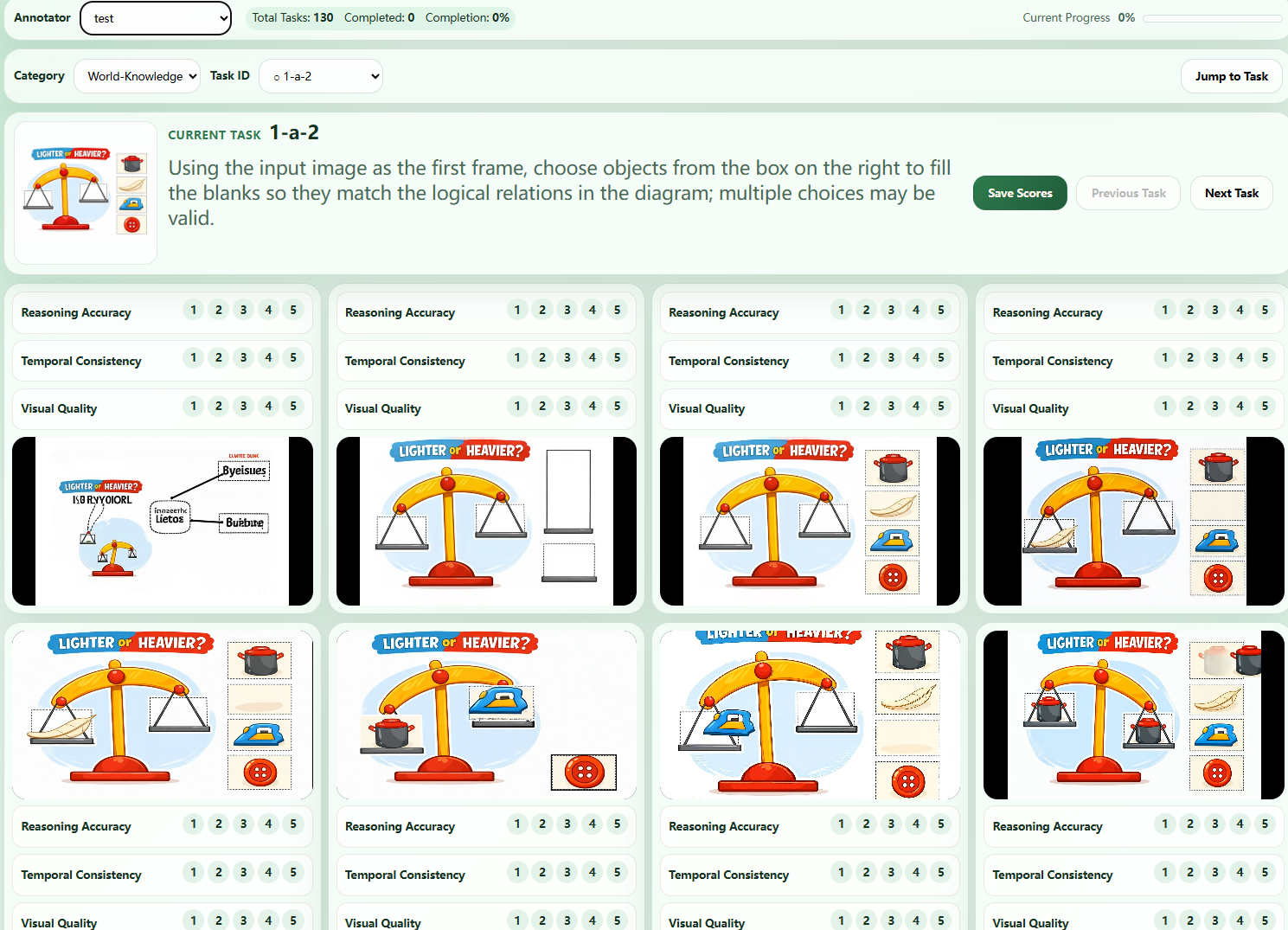

Human-Aligned Stress Testing of Video Generators as Future World-State Predictors

Can a video generator reason about how the world should evolve, not just render it?

436 cases · 4 reasoning dimensions · 11 generators · ~6K expert preference pairs